Binary cross-entropy loss assumes that the values you are trying to predict are either 0 or 1, not continuous values between 0 and 1 as in your example. Because of this, even if the predicted values are equal to the actual values, your loss will not be equal to 0.

We need to compute the mean over the 0th axis, i.e. the batch dimension. I'll make it clear with the code. After `import tensorflow as tf`, the predictions are reshaped into a single batch row, `y_pred = np.array( ).reshape( 1, 3 )`, and the loss object is created with `bce = tf.keras.losses.BinaryCrossentropy( from_logits=False, reduction=tf.keras.losses.Reduction.SUM_OVER_BATCH_SIZE )`.

The expression for binary cross-entropy is the same as mentioned in the question. First, we clip the outputs of our model, setting the min to `tf.keras.backend.epsilon()` and the max to `1 - tf.keras.backend.epsilon()`. Using the expression for BCE, `p1 = y_true * np.log( y_pred + tf.keras.backend.epsilon() )`, with `p2` being the matching term for `1 - y_true`. Notice that the shapes are still preserved, because both terms are element-wise products. An `np.dot` would instead turn them into an array of two elements (as in your implementation). Finally, we add them and compute their mean using `np.mean()` over the batch dimension: `o = -np.mean( p1 + p2 )`.

You can check the problem in your implementation by printing the shape of each of the terms.
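The steps described above can be put together as a minimal NumPy sketch. It is not the Keras implementation itself: the function name `manual_bce`, the sample arrays, and the constant `EPS = 1e-7` (assumed here to match the default of `tf.keras.backend.epsilon()`) are illustrative assumptions, and the `p2` term is written out from the standard BCE expression.

```python
import numpy as np

# Assumption: 1e-7 is used in place of tf.keras.backend.epsilon()
# so that the sketch runs without TensorFlow.
EPS = 1e-7


def manual_bce(y_true, y_pred, eps=EPS):
    """Hypothetical helper: binary cross-entropy averaged over all elements."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.asarray(y_pred, dtype=np.float64)

    # Clip predictions into [eps, 1 - eps] so the logs stay finite.
    y_pred = np.clip(y_pred, eps, 1.0 - eps)

    # Element-wise terms; both keep the (batch, dims) shape of the inputs.
    p1 = y_true * np.log(y_pred + eps)
    p2 = (1.0 - y_true) * np.log(1.0 - y_pred + eps)
    assert p1.shape == y_true.shape and p2.shape == y_true.shape

    # Add the terms and take the negative mean.
    return -np.mean(p1 + p2)


# Illustrative values only; they are not taken from the original post.
y_true = np.array([1.0, 0.0, 1.0]).reshape(1, 3)
y_pred = np.array([0.9, 0.2, 0.6]).reshape(1, 3)
loss = manual_bce(y_true, y_pred)
print("hard labels:", loss)

# Soft labels: the prediction equals the target, yet the loss is not 0.
soft = np.array([0.5, 0.5, 0.5]).reshape(1, 3)
soft_loss = manual_bce(soft, soft)
print("soft labels, perfect prediction:", soft_loss)
```

The second call illustrates the point about continuous targets: with every target and prediction at 0.5, the loss stays well above zero even though the prediction matches the target exactly.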

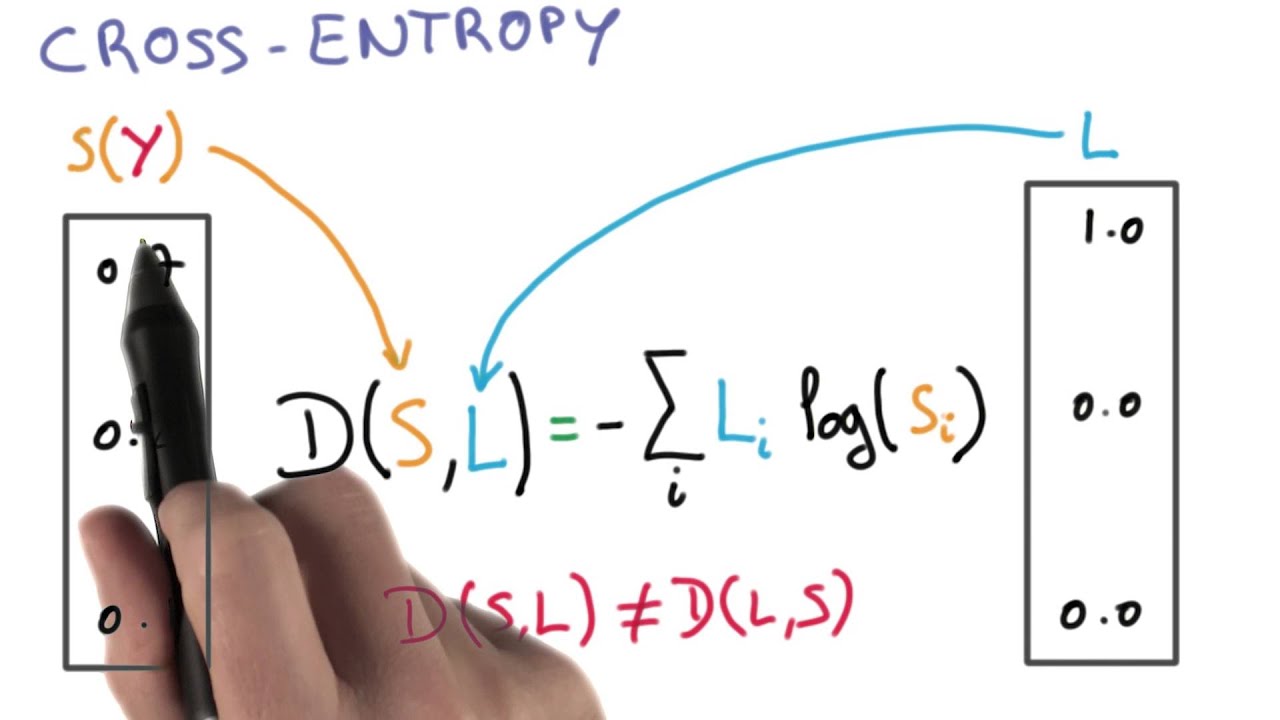

The purpose of cross-entropy is to take the output probabilities (P) and measure their distance from the truth values (as shown in the figure). For example, the desired output is 1, 0, 0, 0 for the class dog, but the model outputs 0.775, 0.116, 0.039, 0.070.

In the constructor of `tf.keras.losses.BinaryCrossentropy()`, you'll notice the arguments `from_logits=False`, `label_smoothing=0` and `reduction`. The default `reduction` argument will most probably have the value `Reduction.SUM_OVER_BATCH_SIZE`.

Assume that our model outputs have a batch size of 1 and an output dimension of 3 (this does not imply that there are 3 classes).
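To make the "distance from the truth values" concrete, here is a small sketch that applies the standard categorical cross-entropy formula, -Σ tᵢ·log(pᵢ), to the dog example. The formula and the variable names are assumptions added for illustration; the text above quotes only the target and the predicted probabilities.

```python
import numpy as np

# Values quoted in the text: one-hot target for "dog" and the model's output.
target = np.array([1.0, 0.0, 0.0, 0.0])
probs = np.array([0.775, 0.116, 0.039, 0.070])

# Assumed formula: categorical cross-entropy, -sum(t_i * log(p_i)).
# Only the true class contributes, so this reduces to -log(0.775).
cross_entropy = -np.sum(target * np.log(probs))
print(float(cross_entropy))
```

A perfect prediction (probability 1 for dog) would give a value of 0, and the value grows as the probability assigned to the true class shrinks.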
